Operating system on Rust. Page memory: advanced level

This article explains how the operating system kernel gets access to physical memory frames. We study the function for converting virtual addresses into physical ones. We will also figure out how to create new mappings in the page tables.

This blog is posted on github . If you have any questions or problems, open the corresponding ticket there. All source for the article here .

From the previous article, we learned about the principles of paging memory and how four-level page tables work on x86_64. We also found that the loader has already configured a hierarchy of page tables for our kernel, so the kernel works on virtual addresses. This increases security, but the problem arises: how to access the real physical addresses that are stored in the page table entries or the

In the first section of the article we will discuss the problem and different approaches to its solution. Then we implement a function that sneaks through a hierarchy of page tables to convert virtual addresses into physical ones. Finally, let's learn how to create new mappings in the page tables and find unused memory frames to create new tables.

To work, you need

Access to the page tables from the kernel is not as simple as it may seem. To understand the problem, take another look at the four-level hierarchy of tables from the previous article:

It is important that each page entry stores the physical address of the following table. This avoids the translation of these addresses, which reduces performance and easily leads to endless loops.

The problem is that we cannot directly access the physical addresses from the kernel, since it also works on virtual addresses. For example, when we refer to the address

Therefore, to access the frames of the page tables, you need to map certain virtual pages with these frames. There are various ways to create such comparisons.

1. A simple solution is the identical mapping of all the page tables .

In this example, we see the same frame mapping. The physical addresses of the page tables are simultaneously valid virtual addresses, so that we can easily access the tables of pages of all levels, starting with register CR3.

However, this approach clutters the virtual address space and makes it difficult to find large contiguous areas of free memory. Let's say we want to create a 1000 KiB virtual memory area in the figure above, for example, to display a file in memory . We cannot start from the

In addition, the creation of new page tables is much more complicated, since we need to find physical frames whose corresponding pages are not yet used. For example, we reserved a 1000 KiB of virtual memory for our file, starting at address

2. Another option is to translate the page tables only temporarily when you need to access them. Temporary comparisons require the identical mapping of only the first level table:

In this figure, the level 1 table manages the first 2 MIBs of the virtual address space. This is possible because access is made from the CR3 register through zero entries in the tables of levels 4, 3 and 2. The entry with index 8 translates the virtual page at

By writing to the identically mapped table of level 1, our kernel can create up to 511 time comparisons (512 minus the record needed for the identical mapping). In the given example, the kernel associated the zero entry of the table of level 1 with the frame at address

Thus, access to an arbitrary frame of the page table with temporary comparisons consists of the following actions:

With this approach, the virtual address space remains clean, since the same 512 virtual pages are constantly used. The disadvantage is some cumbersome, especially since the new mapping may require changing several levels of the table, that is, we need to repeat the described process several times.

3. Although both of the above approaches work, there is a third method: recursive page tables . It combines the advantages of both approaches: it constantly matches all the frames of the page tables, without requiring temporary comparisons, and also keeps a number of matched pages, avoiding the fragmentation of the virtual address space. This is the method we will use.

The idea is to translate some of the records from the table of the fourth level into itself. Thus, we actually reserve a portion of the virtual address space and match all current and future table frames with this space.

Consider an example to see how this all works:

The only difference from the example at the beginning of the article is an additional entry with the index

When the CPU follows this record, it does not refer to the level 3 table, but again refers to the level 4 table. This is similar to a recursive function that calls itself. It is important that the processor assumes that each entry in a table of level 4 points to a table of level 3, so now it treats a table of level 4 as a table of level 3. This works because the tables of all levels in x86_64 have the same structure.

By following the recursive notation one or more times before starting the actual conversion, we can effectively reduce the number of levels the processor goes through. For example, if we follow the recursive notation once, and then go to the level 3 table, the processor thinks that the level 3 table is a level 2 table. Going further, it views the level 2 table as a level 1 table, and a level 1 table as mapped frame in physical memory. This means that we can now read and write to the Tier 1 page table, because the processor thinks it is a mapped frame. The figure below shows the five steps of such a broadcast:

Similarly, we can follow the recursive notation twice before starting the conversion, in order to reduce the number of levels passed to two:

Let's go through this procedure step by step. First, the CPU follows the recursive notation in the table of level 4 and thinks it has reached the table of level 3. Then again follows the recursive notation and thinks that it has reached level 2. But in fact it is still at level 4. Then the CPU goes to a new address and enters the table of level 3, but thinks that it is already in the table of level 1. Finally, at the next entry point in the table of level 2, the processor thinks that it turned to the frame of physical memory. This allows us to read and write to table level 2.

It also accesses the tables of levels 3 and 4. To access the table of level 3, we follow the recursive notation three times: the processor thinks that it is already in the table of level 1, and in the next step we reach level 3, which the CPU considers as a mapped frame. To access the level 4 table itself, we simply follow the recursive notation four times until the processor treats the level 4 table itself as the displayed frame (in blue in the figure below).

The concept is difficult to understand at first, but in practice it works quite well.

So, we can access the tables of all levels by following the recursive notation one or more times. Since the indices in the tables of four levels are derived directly from the virtual address, for this method you need to create special virtual addresses. As we remember, the indexes of the page table are extracted from the address as follows:

Suppose we want to access a level 1 table that displays a specific page. As we learned above, you need to go through the recursive notation once, and then by the indices of the 4th, 3rd and 2nd levels. To do this, we move all blocks of addresses one block to the right and set the index of the recursive record to the place of the initial level 4 index:

To access the table of level 2 of this page, we move all blocks of indices two blocks to the right and set the recursive index to the place of both source blocks: level 4 and level 3:

To access the table of level 3, we do the same, just moving three blocks of addresses to the right.

Finally, to access the level 4 table, move everything four blocks to the right.

Now you can calculate virtual addresses for page tables of all four levels. We can even calculate an address that points precisely to a specific page table entry by multiplying its index by 8, the size of the page table entry.

The table below shows the structure of addresses for accessing different types of frames:

Here

The addresses are octal , because each octal character represents three bits, which allows you to clearly separate the 9-bit indexes of tables of different levels. This is not possible in hexadecimal, where each character represents four bits.

After all this theory, we can finally begin to implement. Conveniently, the loader generated not only the page tables, but also the recursive display in the last entry of the level 4 table. The loader did this because otherwise there would be a chicken or egg problem: we need to access the level 4 table to create a recursive display but we cannot access it without any display.

We have already used this recursive mapping at the end of the previous article to access the level 4 table through the

Thanks to our knowledge of recursive tables, we can now create virtual addresses to access all active tables. And make the broadcast function.

As a first step, we will create a function that converts a virtual address into a physical one, passing through the hierarchy of the page tables:

First, we introduce variables for the recursive index (511 =

The next step is to calculate the virtual addresses of the four page tables, as described in the previous section. Next, we convert each of these addresses into

After calculating the address, we use the indexing operator to view the record in the table of level 4. If this record is zero, then there is no table of level 3 for this record of level 4. This means that

After checking the three pages of a higher level, we can finally read the entry in the table of level 1, which tells us the physical frame with which the address is associated. As a last step, add the page offset to it - and return the address.

If we knew for sure that the address was mapped, then we could directly access the table of level 1, without looking at the pages of a higher level. But since we do not know this, we must first check whether the table of level 1 exists, otherwise our function will return a page missing error for unmatched addresses.

Let's try to use the translation function for virtual addresses in our

After launch we see the following result:

As expected, the address associated with the identifier 0xb8000 is converted to the same physical address. The code page and the stack page are converted to some arbitrary physical addresses, which depend on how the loader created the initial mapping for our kernel.

x86_64 provides the

The

Our

Now, instead of passing

This function gives the same result as the hand-written original translation function.

The block

The problem is that we do not immediately see which parts are unsafe. For example, without looking at the definition of a function,

To avoid this problem, you can add a safe built-in function:

Now the block is

When we read the page tables and created the transform function, the next step is to create a new mapping in the page table hierarchy.

The complexity of this operation depends on the virtual page that we want to display. In the simplest case, a level-1 page table already exists for this page, and we just need to make one entry. In the most difficult case, the page is in a memory area for which level 3 does not exist yet, so you must first create new tables of level 3, level 2 and level 1

Let's start with a simple case when you do not need to create new tables. The loader is loaded into the first megabyte of the virtual address space, so we know that there is a valid level 1 table for this region. For our example, we can choose any unused page in this memory area, for example, the page at the address

We implement it in a new function

The function accepts a variable reference to

In addition to the arguments

The fourth argument must be some structure that implements the treit

The function

Since we know that the address

(If the error 'method

Now we can test the new translation function:

First we create a mapping for the page by address

Then we write down the value in this page

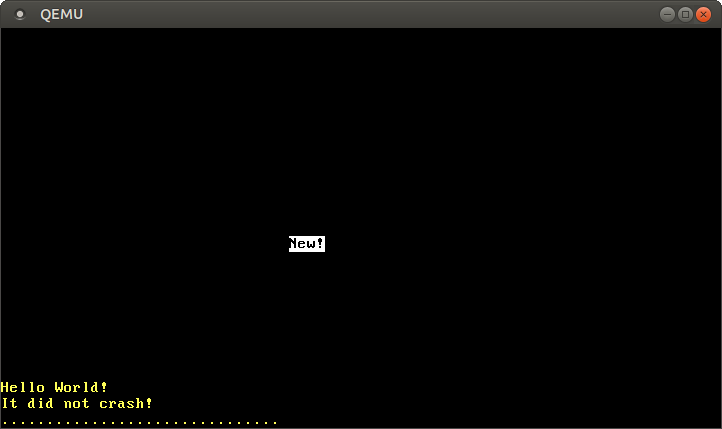

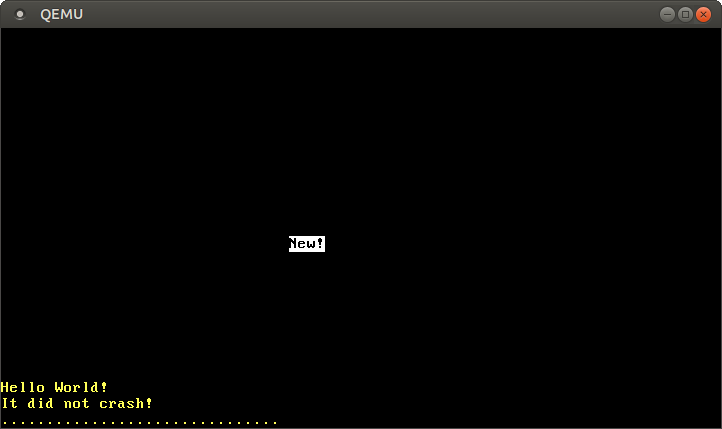

When we run it in QEMU, we see this:

The inscription on the screen.

The code worked because there was already a level 1 table to display the page

When you start it starts panicking with the following error message:

To display pages that do not yet have a Level 1 page table, you need to create the correct one

On different computers, different amounts of physical memory and different areas reserved by devices, such as VGA. Only the BIOS or UEFI firmware knows exactly which memory areas can be used and which are reserved. Both firmware standards provide functions for obtaining a memory map, but they can only be called at the very beginning of the download. Therefore, our bootloader has already requested this (and other) information from the BIOS.

To pass information to the OS kernel, the loader as an argument when calling a function

The structure is

Before using the field

Macro

Because it

To verify the signature, the crate

For the entry point, you no longer need to use

Notice that we are now sending to a

Now, thanks to the information from the BIOS, we have access to the memory map, so we can make a normal frame allocator. Let's start with a common skeleton:

The field is

Initialization

This function using the combinator converts the original memory map into an iterator of the physical frames used:

Now we can change our function

Now the address translation was successful - and we again see the black and white message “New!” On the screen . Behind the scenes, the method

Although our function

In this article, you learned how to use a recursive level 4 table to translate all frames into computable virtual addresses. We used this method to implement the address translation function and create a new mapping in the page tables.

We saw that creating new mappings requires unused frames for new tables. Such a frame allocator can be implemented based on information from the BIOS, which the loader sends to our kernel.

In the next article, we will create a heap memory for our kernel, which will allow us to allocate memory and use different types of collections .

This blog is posted on github . If you have any questions or problems, open the corresponding ticket there. All source for the article here .

Introduction

From the previous article, we learned about the principles of paging memory and how four-level page tables work on x86_64. We also found that the loader has already configured a hierarchy of page tables for our kernel, so the kernel works on virtual addresses. This increases security, but the problem arises: how to access the real physical addresses that are stored in the page table entries or the

CR3 ?In the first section of the article we will discuss the problem and different approaches to its solution. Then we implement a function that sneaks through a hierarchy of page tables to convert virtual addresses into physical ones. Finally, let's learn how to create new mappings in the page tables and find unused memory frames to create new tables.

Dependency updates

To work, you need

x86_64 version 0.4.0 or later. Update dependencies in our Cargo.toml : [dependencies] x86_64 = "0.4.0" # or later Access to page tables

Access to the page tables from the kernel is not as simple as it may seem. To understand the problem, take another look at the four-level hierarchy of tables from the previous article:

It is important that each page entry stores the physical address of the following table. This avoids the translation of these addresses, which reduces performance and easily leads to endless loops.

The problem is that we cannot directly access the physical addresses from the kernel, since it also works on virtual addresses. For example, when we refer to the address

4 KiB , we get access to the virtual address 4 KiB , and not to the physical address where the table of the 4th level pages is stored. If we want to access the physical address of 4 KiB , then we need to use a virtual address that is translated into it.Therefore, to access the frames of the page tables, you need to map certain virtual pages with these frames. There are various ways to create such comparisons.

1. A simple solution is the identical mapping of all the page tables .

In this example, we see the same frame mapping. The physical addresses of the page tables are simultaneously valid virtual addresses, so that we can easily access the tables of pages of all levels, starting with register CR3.

However, this approach clutters the virtual address space and makes it difficult to find large contiguous areas of free memory. Let's say we want to create a 1000 KiB virtual memory area in the figure above, for example, to display a file in memory . We cannot start from the

28 KiB region, because it will rest on the already occupied page at 1004 KiB . Therefore, we will have to look further until we find a suitable large fragment, for example, with 1008 KiB . The same problem of fragmentation arises as in segmented memory.In addition, the creation of new page tables is much more complicated, since we need to find physical frames whose corresponding pages are not yet used. For example, we reserved a 1000 KiB of virtual memory for our file, starting at address

1008 KiB . Now we can no longer use any frame with a physical address between 1000 KiB and 2008 KiB , because it cannot be identically displayed.2. Another option is to translate the page tables only temporarily when you need to access them. Temporary comparisons require the identical mapping of only the first level table:

In this figure, the level 1 table manages the first 2 MIBs of the virtual address space. This is possible because access is made from the CR3 register through zero entries in the tables of levels 4, 3 and 2. The entry with index 8 translates the virtual page at

32 KiB into the physical frame at 32 KiB , thereby identically displaying the level 1 table itself. The figure shows this with a horizontal arrow.By writing to the identically mapped table of level 1, our kernel can create up to 511 time comparisons (512 minus the record needed for the identical mapping). In the given example, the kernel associated the zero entry of the table of level 1 with the frame at address

24 KiB . This created a temporary mapping of the virtual page at 0 KiB address 0 KiB with the physical frame of the level 2 page table, indicated by the dotted arrow. Now the kernel can access the level 2 table by writing to a page that starts at 0 KiB .Thus, access to an arbitrary frame of the page table with temporary comparisons consists of the following actions:

- Find a free entry in the identically mapped level 1 table.

- Match this entry with the physical frame of the page table to which we want to access.

- Access this frame through a virtual page associated with the entry.

- Set the record back to unused, thereby removing the temporary mapping.

With this approach, the virtual address space remains clean, since the same 512 virtual pages are constantly used. The disadvantage is some cumbersome, especially since the new mapping may require changing several levels of the table, that is, we need to repeat the described process several times.

3. Although both of the above approaches work, there is a third method: recursive page tables . It combines the advantages of both approaches: it constantly matches all the frames of the page tables, without requiring temporary comparisons, and also keeps a number of matched pages, avoiding the fragmentation of the virtual address space. This is the method we will use.

Recursive page tables

The idea is to translate some of the records from the table of the fourth level into itself. Thus, we actually reserve a portion of the virtual address space and match all current and future table frames with this space.

Consider an example to see how this all works:

The only difference from the example at the beginning of the article is an additional entry with the index

511 in the table of level 4, which is compared with the physical frame 4 KiB , which is in the table itself.When the CPU follows this record, it does not refer to the level 3 table, but again refers to the level 4 table. This is similar to a recursive function that calls itself. It is important that the processor assumes that each entry in a table of level 4 points to a table of level 3, so now it treats a table of level 4 as a table of level 3. This works because the tables of all levels in x86_64 have the same structure.

By following the recursive notation one or more times before starting the actual conversion, we can effectively reduce the number of levels the processor goes through. For example, if we follow the recursive notation once, and then go to the level 3 table, the processor thinks that the level 3 table is a level 2 table. Going further, it views the level 2 table as a level 1 table, and a level 1 table as mapped frame in physical memory. This means that we can now read and write to the Tier 1 page table, because the processor thinks it is a mapped frame. The figure below shows the five steps of such a broadcast:

Similarly, we can follow the recursive notation twice before starting the conversion, in order to reduce the number of levels passed to two:

Let's go through this procedure step by step. First, the CPU follows the recursive notation in the table of level 4 and thinks it has reached the table of level 3. Then again follows the recursive notation and thinks that it has reached level 2. But in fact it is still at level 4. Then the CPU goes to a new address and enters the table of level 3, but thinks that it is already in the table of level 1. Finally, at the next entry point in the table of level 2, the processor thinks that it turned to the frame of physical memory. This allows us to read and write to table level 2.

It also accesses the tables of levels 3 and 4. To access the table of level 3, we follow the recursive notation three times: the processor thinks that it is already in the table of level 1, and in the next step we reach level 3, which the CPU considers as a mapped frame. To access the level 4 table itself, we simply follow the recursive notation four times until the processor treats the level 4 table itself as the displayed frame (in blue in the figure below).

The concept is difficult to understand at first, but in practice it works quite well.

Address calculation

So, we can access the tables of all levels by following the recursive notation one or more times. Since the indices in the tables of four levels are derived directly from the virtual address, for this method you need to create special virtual addresses. As we remember, the indexes of the page table are extracted from the address as follows:

Suppose we want to access a level 1 table that displays a specific page. As we learned above, you need to go through the recursive notation once, and then by the indices of the 4th, 3rd and 2nd levels. To do this, we move all blocks of addresses one block to the right and set the index of the recursive record to the place of the initial level 4 index:

To access the table of level 2 of this page, we move all blocks of indices two blocks to the right and set the recursive index to the place of both source blocks: level 4 and level 3:

To access the table of level 3, we do the same, just moving three blocks of addresses to the right.

Finally, to access the level 4 table, move everything four blocks to the right.

Now you can calculate virtual addresses for page tables of all four levels. We can even calculate an address that points precisely to a specific page table entry by multiplying its index by 8, the size of the page table entry.

The table below shows the structure of addresses for accessing different types of frames:

| Virtual address for | Address structure ( octal ) |

|---|---|

| Page | 0o_SSSSSS_AAA_BBB_CCC_DDD_EEEE |

| Entry in table level 1 | 0o_SSSSSS_RRR_AAA_BBB_CCC_DDDD |

| Entry in table level 2 | 0o_SSSSSS_RRR_RRR_AAA_BBB_CCCC |

| Entry in table level 3 | 0o_SSSSSS_RRR_RRR_RRR_AAA_BBBB |

| Entry in table level 4 | 0o_SSSSSS_RRR_RRR_RRR_RRR_AAAA |

Here

ААА is a level 4 index, ВВВ is level 3, ССС is level 2, and DDD is level 1 index for the displayed frame, EEEE is its offset. RRR is the index of the recursive entry. The index (three digits) is converted to an offset (four digits) by multiplying by 8 (the size of the page table entry). With this offset, the resulting address directly points to the corresponding page table entry.SSSS are the bits of the sign bit extension, that is, they are all copies of bit 47. This is a special requirement for valid addresses in the x86_64 architecture, which we discussed in the previous article .The addresses are octal , because each octal character represents three bits, which allows you to clearly separate the 9-bit indexes of tables of different levels. This is not possible in hexadecimal, where each character represents four bits.

Implementation

After all this theory, we can finally begin to implement. Conveniently, the loader generated not only the page tables, but also the recursive display in the last entry of the level 4 table. The loader did this because otherwise there would be a chicken or egg problem: we need to access the level 4 table to create a recursive display but we cannot access it without any display.

We have already used this recursive mapping at the end of the previous article to access the level 4 table through the

0xffff_ffff_ffff_f000 address 0xffff_ffff_ffff_f000 . If we convert this address to octal and compare it with the table above, we see that it corresponds exactly to the structure of the entry of table level 4 with RRR = 0o777 , AAAA = 0 and the bits of the character expansion 1 :structure: 0o_SSSSSS_RRR_RRR_RRR_RRR_AAAA Address: 0o_177777_777_777_777_777_0000

Thanks to our knowledge of recursive tables, we can now create virtual addresses to access all active tables. And make the broadcast function.

Address Translation

As a first step, we will create a function that converts a virtual address into a physical one, passing through the hierarchy of the page tables:

// in src/lib.rs pub mod memory; // in src/memory.rs use x86_64::PhysAddr; use x86_64::structures::paging::PageTable; /// Returns the physical address for the given virtual address, or `None` if the /// virtual address is not mapped. pub fn translate_addr(addr: usize) -> Option<PhysAddr> { // introduce variables for the recursive index and the sign extension bits // TODO: Don't hardcode these values let r = 0o777; // recursive index let sign = 0o177777 << 48; // sign extension // retrieve the page table indices of the address that we want to translate let l4_idx = (addr >> 39) & 0o777; // level 4 index let l3_idx = (addr >> 30) & 0o777; // level 3 index let l2_idx = (addr >> 21) & 0o777; // level 2 index let l1_idx = (addr >> 12) & 0o777; // level 1 index let page_offset = addr & 0o7777; // calculate the table addresses let level_4_table_addr = sign | (r << 39) | (r << 30) | (r << 21) | (r << 12); let level_3_table_addr = sign | (r << 39) | (r << 30) | (r << 21) | (l4_idx << 12); let level_2_table_addr = sign | (r << 39) | (r << 30) | (l4_idx << 21) | (l3_idx << 12); let level_1_table_addr = sign | (r << 39) | (l4_idx << 30) | (l3_idx << 21) | (l2_idx << 12); // check that level 4 entry is mapped let level_4_table = unsafe { &*(level_4_table_addr as *const PageTable) }; if level_4_table[l4_idx].addr().is_null() { return None; } // check that level 3 entry is mapped let level_3_table = unsafe { &*(level_3_table_addr as *const PageTable) }; if level_3_table[l3_idx].addr().is_null() { return None; } // check that level 2 entry is mapped let level_2_table = unsafe { &*(level_2_table_addr as *const PageTable) }; if level_2_table[l2_idx].addr().is_null() { return None; } // check that level 1 entry is mapped and retrieve physical address from it let level_1_table = unsafe { &*(level_1_table_addr as *const PageTable) }; let phys_addr = level_1_table[l1_idx].addr(); if phys_addr.is_null() { return None; } Some(phys_addr + page_offset) } First, we introduce variables for the recursive index (511 =

0o777 ) and the sign extension bits (each is 1). Then we calculate the indexes of the page tables and the offset through bitwise operations, as indicated in the illustration:The next step is to calculate the virtual addresses of the four page tables, as described in the previous section. Next, we convert each of these addresses into

PageTable links PageTable . These are unsafe operations, since the compiler cannot know that these addresses are valid.After calculating the address, we use the indexing operator to view the record in the table of level 4. If this record is zero, then there is no table of level 3 for this record of level 4. This means that

addr not associated with any physical memory. So we return None . Otherwise, we know that a level 3 table exists. Then we repeat the procedure as in the previous level.After checking the three pages of a higher level, we can finally read the entry in the table of level 1, which tells us the physical frame with which the address is associated. As a last step, add the page offset to it - and return the address.

If we knew for sure that the address was mapped, then we could directly access the table of level 1, without looking at the pages of a higher level. But since we do not know this, we must first check whether the table of level 1 exists, otherwise our function will return a page missing error for unmatched addresses.

We try

Let's try to use the translation function for virtual addresses in our

_start function: // in src/main.rs #[cfg(not(test))] #[no_mangle] pub extern "C" fn _start() -> ! { […] // initialize GDT, IDT, PICS use blog_os::memory::translate_addr; // the identity-mapped vga buffer page println!("0xb8000 -> {:?}", translate_addr(0xb8000)); // some code page println!("0x20010a -> {:?}", translate_addr(0x20010a)); // some stack page println!("0x57ac001ffe48 -> {:?}", translate_addr(0x57ac001ffe48)); println!("It did not crash!"); blog_os::hlt_loop(); } After launch we see the following result:

As expected, the address associated with the identifier 0xb8000 is converted to the same physical address. The code page and the stack page are converted to some arbitrary physical addresses, which depend on how the loader created the initial mapping for our kernel.

Тип RecursivePageTable

x86_64 provides the

RecursivePageTable type, which implements safe abstractions for various page table operations. With this type, you can much more concisely implement the translate_addr function: // in src/memory.rs use x86_64::structures::paging::{Mapper, Page, PageTable, RecursivePageTable}; use x86_64::{VirtAddr, PhysAddr}; /// Creates a RecursivePageTable instance from the level 4 address. /// /// This function is unsafe because it can break memory safety if an invalid /// address is passed. pub unsafe fn init(level_4_table_addr: usize) -> RecursivePageTable<'static> { let level_4_table_ptr = level_4_table_addr as *mut PageTable; let level_4_table = &mut *level_4_table_ptr; RecursivePageTable::new(level_4_table).unwrap() } /// Returns the physical address for the given virtual address, or `None` if /// the virtual address is not mapped. pub fn translate_addr(addr: u64, recursive_page_table: &RecursivePageTable) -> Option<PhysAddr> { let addr = VirtAddr::new(addr); let page: Page = Page::containing_address(addr); // perform the translation let frame = recursive_page_table.translate_page(page); frame.map(|frame| frame.start_address() + u64::from(addr.page_offset())) } The

RecursivePageTable type completely encapsulates an insecure crawl of page tables, so the unsafe code is no longer needed in the translate_addr function. The init function remains insecure due to the need to ensure the correctness of the passed level_4_table_addr .Our

_start function should be updated for the new function signature as follows: // in src/main.rs #[cfg(not(test))] #[no_mangle] pub extern "C" fn _start() -> ! { […] // initialize GDT, IDT, PICS use blog_os::memory::{self, translate_addr}; const LEVEL_4_TABLE_ADDR: usize = 0o_177777_777_777_777_777_0000; let recursive_page_table = unsafe { memory::init(LEVEL_4_TABLE_ADDR) }; // the identity-mapped vga buffer page println!("0xb8000 -> {:?}", translate_addr(0xb8000, &recursive_page_table)); // some code page println!("0x20010a -> {:?}", translate_addr(0x20010a, &recursive_page_table)); // some stack page println!("0x57ac001ffe48 -> {:?}", translate_addr(0x57ac001ffe48, &recursive_page_table)); println!("It did not crash!"); blog_os::hlt_loop(); } Now, instead of passing

LEVEL_4_TABLE_ADDR to translate_addr and accessing page tables via unsafe raw pointers, we pass references to the RecursivePageTable type. Thus, we now have a safe abstraction and clear semantics of ownership. This ensures that we cannot accidentally change the page table in shared access, because to change it we need to take possession of the RecursivePageTable exclusively.This function gives the same result as the hand-written original translation function.

Making Unsafe Functions Safer

memory::initis an insecure function: a block is required to call it unsafe, because the caller must ensure that certain requirements are met. In our case, the requirement is that the transmitted address is exactly matched to the physical frame of the table of the level 4 pages.The block

unsafecontains the whole body of the unsafe function so that all types of operations are performed without creating additional blocks unsafe. Therefore, we do not need an insecure block for dereferencing level_4_table_ptr: pub unsafe fn init(level_4_table_addr: usize) -> RecursivePageTable<'static> { let level_4_table_ptr = level_4_table_addr as *mut PageTable; let level_4_table = &mut *level_4_table_ptr; // <- this operation is unsafe RecursivePageTable::new(level_4_table).unwrap() } The problem is that we do not immediately see which parts are unsafe. For example, without looking at the definition of a function,

RecursivePageTable::new we cannot say whether it is safe or not. It is so easy to accidentally skip some unsafe code.To avoid this problem, you can add a safe built-in function:

// in src/memory.rs pub unsafe fn init(level_4_table_addr: usize) -> RecursivePageTable<'static> { /// Rust currently treats the whole body of unsafe functions as an unsafe /// block, which makes it difficult to see which operations are unsafe. To /// limit the scope of unsafe we use a safe inner function. fn init_inner(level_4_table_addr: usize) -> RecursivePageTable<'static> { let level_4_table_ptr = level_4_table_addr as *mut PageTable; let level_4_table = unsafe { &mut *level_4_table_ptr }; RecursivePageTable::new(level_4_table).unwrap() } init_inner(level_4_table_addr) } Now the block is

unsafeagain required for dereference level_4_table_ptr, and we immediately see that these are the only unsafe operations. Currently, Rust has an RFC open for modifying this unfortunate feature of unsafe functions.Create a new mapping

When we read the page tables and created the transform function, the next step is to create a new mapping in the page table hierarchy.

The complexity of this operation depends on the virtual page that we want to display. In the simplest case, a level-1 page table already exists for this page, and we just need to make one entry. In the most difficult case, the page is in a memory area for which level 3 does not exist yet, so you must first create new tables of level 3, level 2 and level 1

Let's start with a simple case when you do not need to create new tables. The loader is loaded into the first megabyte of the virtual address space, so we know that there is a valid level 1 table for this region. For our example, we can choose any unused page in this memory area, for example, the page at the address

0x1000. As the desired frame, use the 0xb8000VGA text buffer frame. So easy to check how our address translation works.We implement it in a new function

create_mapingin the module memory: // in src/memory.rs use x86_64::structures::paging::{FrameAllocator, PhysFrame, Size4KiB}; pub fn create_example_mapping( recursive_page_table: &mut RecursivePageTable, frame_allocator: &mut impl FrameAllocator<Size4KiB>, ) { use x86_64::structures::paging::PageTableFlags as Flags; let page: Page = Page::containing_address(VirtAddr::new(0x1000)); let frame = PhysFrame::containing_address(PhysAddr::new(0xb8000)); let flags = Flags::PRESENT | Flags::WRITABLE; let map_to_result = unsafe { recursive_page_table.map_to(page, frame, flags, frame_allocator) }; map_to_result.expect("map_to failed").flush(); } The function accepts a variable reference to

RecursivePageTable(it will change it) and FrameAllocator, which is explained below. Then it applies the function map_toin Tray Mapperto map the page to the address 0x1000with the physical frame to the address 0xb8000. The function is insecure, as it is possible to violate the memory security with invalid arguments.In addition to the arguments

pageand frame, the function map_totakes two more arguments. The third argument is a set of flags for the page table. We set the flag PRESENTrequired for all valid entries, and the flag WRITABLEfor recording.The fourth argument must be some structure that implements the treit

FrameAllocator. This argument is needed by the method.map_to, because creating new page tables may require unused frames. The implementation requires the argument trait Size4KiB, as types Pageand PhysFrameare universal for the trait PageSize, working with standard 4 KiB pages and with enormous pages 2 MiB / 1 GiB.The function

map_tomay fail, therefore returns Result. Since this is just an example of code that should not be reliable, we simply use it expectwith a panic when an error occurs. If successful, the function returns a type MapperFlushthat provides an easy way to clear the newly matched page from the associative translation buffer (TLB) method flush. LikeResult, the type uses the attribute #[must_use]and issues a warning if we accidentally forget to use it.Since we know that the address

0x1000does not require new page tables, it FrameAllocatorcan always return None. To test the function, create the following EmptyFrameAllocator: // in src/memory.rs /// A FrameAllocator that always returns `None`. pub struct EmptyFrameAllocator; impl FrameAllocator<Size4KiB> for EmptyFrameAllocator { fn allocate_frame(&mut self) -> Option<PhysFrame> { None } } (If the error 'method

allocate_frameis not a member of trait FrameAllocator' appears , you need to upgrade x86_64to version 0.4.0.)Now we can test the new translation function:

// in src/main.rs #[cfg(not(test))] #[no_mangle] pub extern "C" fn _start() -> ! { […] // initialize GDT, IDT, PICS use blog_os::memory::{create_example_mapping, EmptyFrameAllocator}; const LEVEL_4_TABLE_ADDR: usize = 0o_177777_777_777_777_777_0000; let mut recursive_page_table = unsafe { memory::init(LEVEL_4_TABLE_ADDR) }; create_example_mapping(&mut recursive_page_table, &mut EmptyFrameAllocator); unsafe { (0x1900 as *mut u64).write_volatile(0xf021f077f065f04e)}; println!("It did not crash!"); blog_os::hlt_loop(); } First we create a mapping for the page by address

0x1000, calling the function create_example_mappingwith a variable reference to the instance RecursivePageTable. This translates the page 0x1000into a VGA text buffer, so we will see some result on the screen.Then we write down the value in this page

0xf021f077f065f04e, which corresponds to the string “New!” On a white background. Just do not need to write this value immediately to the top of the page 0x1000, because the top line will move next from the screen println, and we will write it at the offset 0x900, which is approximately in the middle of the screen. As we know from the VGA Text Mode article , writing to the VGA buffer should be volatile, so we use the method write_volatile.When we run it in QEMU, we see this:

The inscription on the screen.

The code worked because there was already a level 1 table to display the page

0x1000. If we try to translate a page for which such a table does not yet exist, the function map_towill return an error, because it will try to allocate frames from to create new page tables EmptyFrameAllocator. We will see this if we try to translate the page 0xdeadbeaf000instead 0x1000: // in src/memory.rs pub fn create_example_mapping(…) { […] let page: Page = Page::containing_address(VirtAddr::new(0xdeadbeaf000)); […] } // in src/main.rs #[no_mangle] pub extern "C" fn _start() -> ! { […] unsafe { (0xdeadbeaf900 as *mut u64).write_volatile(0xf021f077f065f04e)}; […] } When you start it starts panicking with the following error message:

panicked at 'map_to failed: FrameAllocationFailed', .../result.rs:999zh

To display pages that do not yet have a Level 1 page table, you need to create the correct one

FrameAllocator. But how do you know which frames are free and how much physical memory is available?Boot information

On different computers, different amounts of physical memory and different areas reserved by devices, such as VGA. Only the BIOS or UEFI firmware knows exactly which memory areas can be used and which are reserved. Both firmware standards provide functions for obtaining a memory map, but they can only be called at the very beginning of the download. Therefore, our bootloader has already requested this (and other) information from the BIOS.

To pass information to the OS kernel, the loader as an argument when calling a function

_startgives a link to the information structure of the boot. Add this argument to our function: // in src/main.rs use bootloader::bootinfo::BootInfo; #[cfg(not(test))] #[no_mangle] pub extern "C" fn _start(boot_info: &'static BootInfo) -> ! { // new argument […] } The structure is

BootInfostill being finalized, so do not be surprised at the failures when upgrading to future versions of the bootloader that are not compatible with semver . He currently has three fields p4_table_addr, memory_mapand package:- The field

p4_table_addrcontains the recursive virtual address of the level 4 page table. Due to this, it is not necessary to prescribe the address strictly0o_177777_777_777_777_777_0000. - This field

memory_mapis of the most interest because it contains a list of all memory areas and their type (unused, reserved or others). - The field

packageis the current function for associating additional data with the loader. The implementation is not completed, so we can ignore it for now.

Before using the field

memory_mapto create the correct one FrameAllocator, we want to ensure the correct type of argument boot_info.Macro entry_point

Because it

_startis called externally, the function’s signature is not verified. This means that arbitrary arguments will not lead to compilation errors, but may cause failure or undefined behavior at run time.To verify the signature, the crate

bootloaderuses the entry_pointtype-tested macro to define the Rust function as an entry point . Let's rewrite our function under this macro: // in src/main.rs use bootloader::{bootinfo::BootInfo, entry_point}; entry_point!(kernel_main); #[cfg(not(test))] fn kernel_main(boot_info: &'static BootInfo) -> ! { […] // initialize GDT, IDT, PICS let mut recursive_page_table = unsafe { memory::init(boot_info.p4_table_addr as usize) }; […] // create and test example mapping println!("It did not crash!"); blog_os::hlt_loop(); } For the entry point, you no longer need to use

extern "C"or no_mangle, since the macro sets a real low-level entry point _start. The function has kernel_mainnow become a completely normal Rust function, so we can choose an arbitrary name for it. It is important that it is already typed, so a compilation error will occur if you change the signature of the function, for example, by adding an argument or changing its type.Notice that we are now sending to a

memory::initnon- hardcoded address as well boot_info.p4_table_addr. Thus, the code will work even if the future version of the loader chooses another level 4 page table entry for the recursive display.Frame allocation

Now, thanks to the information from the BIOS, we have access to the memory map, so we can make a normal frame allocator. Let's start with a common skeleton:

// in src/memory.rs pub struct BootInfoFrameAllocator<I> where I: Iterator<Item = PhysFrame> { frames: I, } impl<I> FrameAllocator<Size4KiB> for BootInfoFrameAllocator<I> where I: Iterator<Item = PhysFrame> { fn allocate_frame(&mut self) -> Option<PhysFrame> { self.frames.next() } } The field is

framesinitialized with an arbitrary frame iterator . This allows you to simply delegate calls allocto the Iterator :: next method .Initialization

BootInfoFrameAllocatoroccurs in a new function init_frame_allocator: // in src/memory.rs use bootloader::bootinfo::{MemoryMap, MemoryRegionType}; /// Create a FrameAllocator from the passed memory map pub fn init_frame_allocator( memory_map: &'static MemoryMap, ) -> BootInfoFrameAllocator<impl Iterator<Item = PhysFrame>> { // get usable regions from memory map let regions = memory_map .iter() .filter(|r| r.region_type == MemoryRegionType::Usable); // map each region to its address range let addr_ranges = regions.map(|r| r.range.start_addr()..r.range.end_addr()); // transform to an iterator of frame start addresses let frame_addresses = addr_ranges.flat_map(|r| r.into_iter().step_by(4096)); // create `PhysFrame` types from the start addresses let frames = frame_addresses.map(|addr| { PhysFrame::containing_address(PhysAddr::new(addr)) }); BootInfoFrameAllocator { frames } } This function using the combinator converts the original memory map into an iterator of the physical frames used:

- Сначала вызываем метод

iterдля преобразования карты распределения памяти в итераторMemoryRegion. Затем используем методfilter, чтобы пропустить зарезервированные или недоступные регионы. Загрузчик обновляет карту распределения памяти для всех созданных им сопоставлений, поэтому фреймы, используемые нашим ядром (код, данные или стек) или для хранения загрузочной информации, уже помечены какInUseили аналогично. Таким образом, мы можем быть уверены, что используемые фреймы не используются где-то ещё. - На втором этапе используем комбинатор

mapи синтаксис range Rust для преобразования нашего итератора областей памяти в итератор диапазонов адресов. - The third step is the most difficult: we transform each range into an iterator using the method

into_iter, and then select each 4096th address withstep_by. Since the page size is 4096 bytes (4 KiB), we get the start address of each frame. The bootloader page aligns all used memory areas, so we do not need an alignment or rounding code. Replacingmaponflat_map, we getIterator<Item = u64>insteadIterator<Item = Iterator<Item = u64>>. - At the final stage, we convert initial addresses into types

PhysFramein order to construct the required oneIterator<Item = PhysFrame>. Then use this iterator to create and return a new oneBootInfoFrameAllocator.

Now we can change our function

kernel_mainto pass an instance BootInfoFrameAllocatorinstead of EmptyFrameAllocator: // in src/main.rs #[cfg(not(test))] fn kernel_main(boot_info: &'static BootInfo) -> ! { […] // initialize GDT, IDT, PICS use x86_64::structures::paging::{PageTable, RecursivePageTable}; let mut recursive_page_table = unsafe { memory::init(boot_info.p4_table_addr as usize) }; // new let mut frame_allocator = memory::init_frame_allocator(&boot_info.memory_map); blog_os::memory::create_mapping(&mut recursive_page_table, &mut frame_allocator); unsafe { (0xdeadbeaf900 as *mut u64).write_volatile(0xf021f077f065f04e)}; println!("It did not crash!"); blog_os::hlt_loop(); } Now the address translation was successful - and we again see the black and white message “New!” On the screen . Behind the scenes, the method

map_tocreates the missing page tables as follows:- Selects an unused frame from

frame_allocator. - Matches the top-level table entry to this frame. Now the frame is available through a recursive page table.

- Zeroes the frame to create a new, empty page table.

- Moves to the next level table.

Although our function

create_mapingis just an example, we can now create new mappings for arbitrary pages. This is very useful when allocating memory and implementing multithreading in future articles.Summary

In this article, you learned how to use a recursive level 4 table to translate all frames into computable virtual addresses. We used this method to implement the address translation function and create a new mapping in the page tables.

We saw that creating new mappings requires unused frames for new tables. Such a frame allocator can be implemented based on information from the BIOS, which the loader sends to our kernel.

What's next

In the next article, we will create a heap memory for our kernel, which will allow us to allocate memory and use different types of collections .

Source: https://habr.com/ru/post/439066/